Copyright Notice: This article is Copyright AI Factory Ltd. Ideas and code belonging to AI Factory may only be used with the direct written permission of AI Factory Ltd.

A recent success at AI Factory has been our Tutor system for the Japanese game of Shogi. It grew out of the need to provide a help system for our Shotest Shogi product, for which we designed a purpose-built interpreted teaching language. Once in place, this rapidly evolved into a remarkably powerful tool for teaching Shogi and became a central feature of the product.

AI Factory already has a Pool and Snooker program with a powerful AI engine and the ability to play Pool and Snooker with a human-like style. In evolving this into a next generation product we elected to create a new teaching system for Pool/Snooker. This article describes the results.

Design Goals

For Shogi, the tutorial was based on pre-prepared scripts that let the 3D GUI play through examples and test the user. The script not only verified correct test responses but could also respond to incorrect ones. Because the tutorial was script-based, it could be created externally without any changes to the core product. A script could be updated in Notepad and tested immediately.

The idea of extending this teaching to Pool was attractive, but it has been done on a rather different basis. Rather than scripted tests, we elected to have the AI create the tests for the user to try. The format is that the user plays against the tutor and, when it comes to the user's turn, the AI Tutor selects a shot for the user to try. The user is allowed multiple attempts at the shot and can also elect (after 3 attempts) to have the AI Tutor demonstrate how to play it. Play is then balanced by the AI Tutor so that the game progresses in a normal fashion, with the tutor deliberately missing shots if it is ahead and making pots if it is behind.
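The session rules above can be sketched in a few lines. This is a minimal illustration only, not the product's code: the class `TutorSession` and its method names are hypothetical, and the choice to have the tutor pot when the scores are level is an assumption, since the text only covers the ahead and behind cases.

```python
from dataclasses import dataclass

# From the text: the demonstration becomes available after 3 attempts.
DEMO_UNLOCK_ATTEMPTS = 3


@dataclass
class TutorSession:
    """Hypothetical bookkeeping for one tutor game."""
    tutor_score: int = 0
    user_score: int = 0
    attempts_on_current_shot: int = 0

    def new_shot(self) -> None:
        """The tutor has set a fresh shot, so the attempt count restarts."""
        self.attempts_on_current_shot = 0

    def record_attempt(self) -> None:
        """The user has tried the current shot once more."""
        self.attempts_on_current_shot += 1

    def demo_available(self) -> bool:
        """True once the user may ask the tutor to demonstrate the shot."""
        return self.attempts_on_current_shot >= DEMO_UNLOCK_ATTEMPTS

    def tutor_plays_to_pot(self) -> bool:
        """Balance the game: miss on purpose when ahead, pot when behind.

        Potting when the scores are level is an assumption of this sketch.
        """
        return self.tutor_score <= self.user_score
```

Keeping the balancing rule in one small method makes it easy to swap in a softer rule later, for example one that only misses deliberately once the lead exceeds some margin.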

Pitfalls!

Creation of an intelligent Pool/Snooker game showed up some curious attributes. Historically, an attempt to apply AI to a problem often results in an initial comically poor performance that gradually improves with refinement and eventually catches up with human ability. With Pool the opposite is true. Our first version that could play Pool at all played a blinding game! For a start it potted on the break and then basically cleared the table. Beating the AI therefore required you to play first and also get a pot on the break. A Pool product launched with these attributes is not going to be popular!

Of course the AI has the huge advantage that it can exactly determine the consequences of its play. It essentially has the perfect aiming cue and never misses. In consequence the AI selected fantasy shots that potted 4 balls at a time. A simple fix for this is, of course, to introduce random errors. However this is not enough. The Pool engine will still choose the fantastic 4-pot shot, but then just miss. Such shots look incomprehensible; the intended object of the shot is probably not obvious.
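The limitation of random error on its own can be shown with a toy sketch. Everything here is illustrative: `perturb_aim` and `pick_by_payoff` are hypothetical names, and Gaussian noise on the aim angle is an assumed error model rather than the one used in the product.

```python
import random
from typing import List, Tuple

Shot = Tuple[str, int]  # (description, balls potted if the shot comes off)


def perturb_aim(aim_deg: float, sigma_deg: float, rng: random.Random) -> float:
    """Add Gaussian error to the aim angle (assumed error model)."""
    return aim_deg + rng.gauss(0.0, sigma_deg)


def pick_by_payoff(shots: List[Shot]) -> Shot:
    """A perfect-aim AI: choose whatever pots the most balls."""
    return max(shots, key=lambda shot: shot[1])


shots = [("direct pot", 1), ("four-ball fantasy", 4)]
chosen = pick_by_payoff(shots)  # still the fantasy shot
played_aim = perturb_aim(12.0, 1.5, random.Random(2008))  # and now it misses
```

The noise is applied after the choice has been made, so the selection is unchanged: the engine still commits to the four-ball shot and simply fails to execute it.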

The real problem to be solved is to have the AI play shots that take percentage play into account. Added to that, the AI needs to penalise shots that humans cannot easily judge. For example, the AI is just as happy playing a direct pot as it is playing a shot that hits multiple rails first. As far as the AI is concerned the two shots are equally easy, but not so for humans, who cannot judge multi-rail shots so readily.
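One way to picture percentage play is to weight each candidate's payoff by an estimate of how likely a human is to make it. The sketch below is an assumption-laden illustration, not the engine's evaluation: the `CandidateShot` fields, the penalty factors and the function names are all invented for this example.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CandidateShot:
    """Hypothetical description of one shot the AI is considering."""
    name: str
    balls_potted: int     # payoff if the shot succeeds
    cut_angle_deg: float  # 0 means a straight pot
    distance: float       # total ball travel, in table lengths
    rails_first: int      # cushions struck before the object ball


def human_success_probability(shot: CandidateShot) -> float:
    """Rough chance that a human makes the shot (all factors are assumed)."""
    p = 0.95
    p *= max(0.0, 1.0 - shot.cut_angle_deg / 90.0)  # thin cuts are harder
    p *= 1.0 / (1.0 + 0.5 * shot.distance)          # long shots are harder
    p *= 0.4 ** shot.rails_first                    # rails are hard to judge
    p *= 0.5 ** max(0, shot.balls_potted - 1)       # multi-ball pots are rare
    return p


def expected_value(shot: CandidateShot) -> float:
    """Payoff weighted by the estimated human success rate."""
    return human_success_probability(shot) * shot.balls_potted


def choose_shot(candidates: Sequence[CandidateShot]) -> CandidateShot:
    """Pick the shot with the best percentage, not the biggest payoff."""
    return max(candidates, key=expected_value)


direct = CandidateShot("direct pot", 1, 10.0, 0.5, 0)
fantasy = CandidateShot("four-ball fantasy", 4, 30.0, 2.0, 2)
best = choose_shot([direct, fantasy])  # the direct pot wins on percentage
```

With these made-up factors the two-rail, four-ball shot scores far below the simple pot, which is the behaviour a human opponent would recognise as sensible.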

The consequence is that the Pool/Snooker AI needs to try and emulate human frailty in order to play with a style that is satisfying and makes the user feel that they have a human-like opponent.

Our Pool/Snooker AI did a pretty good job of that: as a GameSpot review of Friday Night 3D Pool observed, it provided a play style that equated to human play and offered a range of believable opponent play styles.

Getting the Tutor to Choose Shots

This proved harder than expected. Of course in "tutor" mode the AI would be biased towards simpler shots, but in practice the early results were disappointing. Although the AI played with a credible style head-to-head, when selecting shots for the user to try it would often pick a shot that was clearly not the best option. Potting shots were generally OK, but the safety shots chosen often did not seem as good as the AI's apparent safety play across the table. One reason for this is that when the AI plays, it simply takes its shot and you do not always have a chance to assess it critically. Once the shot has been taken you can no longer compare the result with the position before the shot, so you cannot easily judge whether it was the right choice for that situation.

By contrast, a shot set by the tutor is exposed to full scrutiny, because the user studies the position before playing it. The solution was to take the AI to the next level, with more solid and believable safety play. Once the tutor has chosen a shot to test the user, it really has to be a good choice!

A substantial benefit of this added effort into the AI has been a significant enhancement of the general play style that the AI provides.

Information Presented by the Tutor

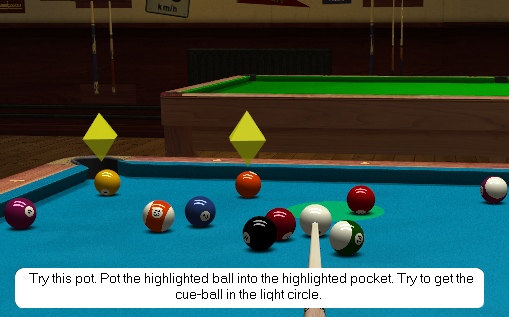

Presenting information from the tutor required some thought. Specifying a pot is not so hard, but you also need to indicate where the cueball would ideally be left after the pot, so that a follow-on pot is possible. This cannot be a pinpoint position, but then again how much tolerance should there be? The solution was to compare the intended shot with the same shot played with marginally less strength. The ideal cueball position is the target, with a radius corresponding to the distance between the cueball positions for the two tested shots. In the screenshot above you can see this "cueball" area highlighted by a green circle.
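The tolerance calculation can be sketched as follows. This is a minimal illustration under stated assumptions: `simulate` is a hypothetical stand-in for the physics engine that returns the resting cueball position for a given strength, and the 5% reduction is an arbitrary reading of "marginally less strength".

```python
import math
from typing import Callable, Tuple

Point = Tuple[float, float]


def cueball_target_zone(
    simulate: Callable[[float], Point],
    strength: float,
    reduction: float = 0.05,  # assumed meaning of "marginally less"
) -> Tuple[Point, float]:
    """Return (centre, radius) of the cueball target circle.

    The centre is where the cueball rests for the intended shot. The radius
    is how far that resting point moves when the same shot is played
    slightly softer.
    """
    ideal = simulate(strength)
    softer = simulate(strength * (1.0 - reduction))
    return ideal, math.dist(ideal, softer)


def in_target_zone(position: Point, centre: Point, radius: float) -> bool:
    """True if the cueball finished inside the highlighted circle."""
    return math.dist(position, centre) <= radius


# Toy physics for illustration only: the cueball rolls along the x axis.
centre, radius = cueball_target_zone(lambda s: (10.0 * s, 0.0), strength=1.0)
```

A useful property of this approach is that the circle scales with the shot: where a small change in strength moves the cueball a long way, the target is generous, and where the cueball position is insensitive to strength the target is tight.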

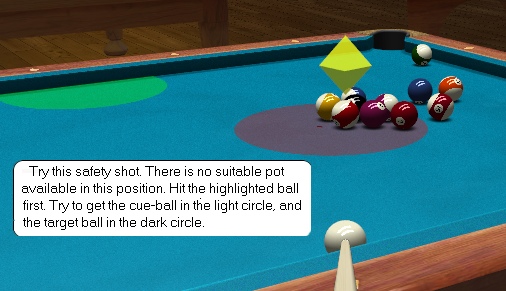

With a safety shot, you will need to have a target for both the object ball and cueball. Both are key to understanding the shot to be played. In the screenshot above the user has to position both balls.

Choosing between Potting and Safety

Of course the AI Tutor can almost always find a pot to play, but it needs to stop setting impossible pots. To achieve that the Tutor evaluates the "easiness" of a pot and, if it looks too difficult, searches for a safety play instead. This allows a natural gradation into multiple levels of tutor session. At a low level the user is only presented with easy pots, whereas a higher level will offer more ambitious, difficult shots. The player can therefore progress as they learn, switching to more advanced tutorials as they get better.
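The decision described above amounts to a threshold test per tutor level. The sketch below assumes easiness is a score between 0 and 1; the level names, the threshold values and the function `choose_task` are hypothetical and chosen only to illustrate the idea.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical minimum "easiness" (0 = impossible, 1 = trivial) that a pot
# must reach before the tutor will set it at each level.
LEVEL_MIN_EASINESS = {"beginner": 0.7, "intermediate": 0.5, "advanced": 0.3}

Pot = Tuple[str, float]  # (description, easiness score)


def choose_task(pots: Sequence[Pot], level: str) -> Tuple[str, Optional[str]]:
    """Set the easiest available pot if it is easy enough for this level.

    Otherwise fall back to a safety, whose selection is outside this sketch.
    """
    threshold = LEVEL_MIN_EASINESS[level]
    if pots:
        name, easiness = max(pots, key=lambda pot: pot[1])
        if easiness >= threshold:
            return ("pot", name)
    return ("safety", None)


position = [("long red", 0.35)]
beginner_task = choose_task(position, "beginner")  # falls back to a safety
advanced_task = choose_task(position, "advanced")  # sets the long red
```

The same table position therefore produces different lessons at different levels: the beginner is asked to play safe, while the advanced player is asked to attempt the harder pot.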

Having the Tutor Guide the Player

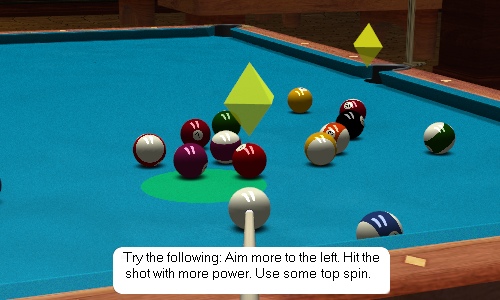

A natural addition to this setting of tests is to have the AI Tutor also offer advice. In the screenshot above the player has just missed a pot, so the AI suggests how they might adjust their shot to get closer to the best shot.
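The article does not say how the advice is derived, so the following is purely an illustrative guess at one simple approach: compare the parameters of the shot the user played with those of the shot the tutor intended. The `ShotParams` fields, the tolerances, the wording of the tips and the convention that the aim angle increases clockwise when viewed from above are all assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ShotParams:
    """Hypothetical shot description."""
    aim_deg: float   # assumed to increase clockwise, viewed from above
    strength: float  # 0.0 (softest) to 1.0 (hardest)


def advise(
    intended: ShotParams,
    played: ShotParams,
    aim_tol_deg: float = 0.5,
    strength_tol: float = 0.05,
) -> List[str]:
    """Return corrections that would bring the played shot nearer the ideal."""
    tips: List[str] = []
    aim_error = played.aim_deg - intended.aim_deg
    if abs(aim_error) > aim_tol_deg:
        # A positive error means the shot went too far clockwise (right).
        side = "left" if aim_error > 0 else "right"
        tips.append(f"Aim about {abs(aim_error):.1f} degrees further {side}.")
    strength_error = played.strength - intended.strength
    if abs(strength_error) > strength_tol:
        tips.append("Play it softer." if strength_error > 0 else "Play it harder.")
    return tips


tips = advise(ShotParams(30.0, 0.60), ShotParams(32.0, 0.40))
```

An empty list means the attempt was within tolerance on both counts, which a GUI could present as a simple confirmation rather than a correction.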

Conclusions

The system above is still evolving, but it offers something quite new. It is not perfect and will sometimes choose a non-optimal shot to play. However, it generally works well and provides a tutorial system with endless variety. Learning with the AI tutor is not repetitious, as each lesson is different. The result is a teaching system that feels as though you have a real tutor there: not a fixed script created by some unknown remote tutor, but a tutor who sets tasks in response to your actual play across the table.

This has been a very worthwhile project and has contributed significantly to our recent theme of creating both AI and teaching systems.

Jeff Rollason and Daniel Orme: July 2008